Why context engineering was always the job

AI has general knowledge of the universe, not yours. The engineers who get results were always doing context work.

I've spent a lot of this week talking with colleagues about AI: how it can help, how it won't, when to lean in, when to hold back. The conversations have been dense, and the thinking has been spilling over into a burst of writing.

Every time I write about AI and engineering lately, I end up at the same place.

tl;dr

- The value of engineering was never in the syntax; it was in holding enough context to be precise about the right things

- AI makes this obvious: an LLM has general knowledge of the universe, not your context

- The people who get good results from AI were already good at communicating context to humans

- No one has invented mind reading. Fostering context was always the work, and it still is

The value was never the syntax

Matteo Collina describes software engineering splitting into three tiers, and at every tier, the bottleneck is someone who understands what's being built and why. Addy Osmani names comprehension debt: code that works fine until someone needs to change it and nobody remembers how it's shaped. I've written about context curation, about context engineering, about planning over prompting. Different angles, same thesis: the hard problem was never the code.

The hard problem was always holding enough context to know the right way forward.

The tools were never the destination

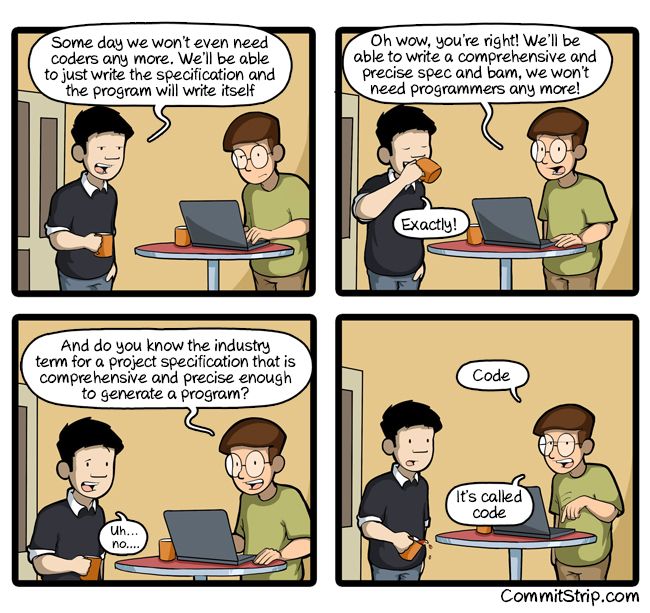

There's a CommitStrip comic from nearly ten years ago where a manager announces that someday they won't need coders — they'll just write a spec and the program will write itself. The developer asks, "Do you know the industry term for a spec that's comprehensive and precise enough to generate a program?" Punchline: "It's called code."

Steve Krouse, who tipped me off to the comic, makes the same argument with more rigour in his recent "Reports of code's death are greatly exaggerated." Vague English specs become complex problems at scale. Code is how humans master complexity through abstraction. Dijkstra's line from his 1972 Turing Award lecture still holds: "the purpose of abstracting is not to be vague, but to create a new semantic level in which one can be absolutely precise."

The punchline is the thesis. The act of being precise enough to solve a problem is the work; the language is just the medium. PHP, Python, a prompt to an LLM: these are all ways of expressing precision. Kent Beck puts it sharply: AI "deprecates formerly leveraged skills like language expertise while amplifying vision, strategy, task breakdown, and feedback loops." Engineering has changed enormously over the decades, but the cognitive work underneath has not: decomposing an abstract problem into something rigid enough to execute. The true value was never in the syntax.

This was true before AI, too. A new hire without context writes bad code. A contractor without domain knowledge builds the wrong thing. A consultant without organisational awareness recommends something that can't land. The failure mode is always the same: someone technically competent but missing the context of what they're trying to do, how the system is structured, or what the organisation actually needs.

Large language models just made the context gap visible

An LLM has loads of general human knowledge. But general knowledge is not the context you need.

AI can't read your mind. It doesn't know your team's constraints, your users' actual behaviour, or why that module is shaped the way it is. It has what gets fed into the prompt, nothing more. Feed it bad context, get bad output. This isn't a tool failure. It's a framing failure.

The people who get good results from AI are, in my experience, the ones who were already good at communicating context to humans. They write clear briefs. They think about audience and constraints before starting. They decompose problems into steps. These are strategic project management skills, not prompt engineering skills.

AI just made the cost of poor context transfer instant and visible. Where it used to take weeks for a misaligned contractor to deliver the wrong thing, now an LLM does it in seconds.

I used to say "content is king." I spent years arguing for quality content, building models that connected content to strategy and user actions, watching AI commoditize surface-level reference content until the content itself wasn't the differentiator anymore. What I was actually advocating for was context all along: who the content is for, how it connects, what it enables, why it exists. Content without that is just data. I wrote recently about content architecture as infrastructure, the structure of how content relates across systems, and that's context work too. An LLM can generate content. It can't generate your real context.

Context was always the job

The work is what it always was: context. Holding it, documenting it, transferring it, verifying it.

But context isn't one job. It's different work at different levels:

- Individual contributors need context to do good work; they consume it

- Senior engineers hold and transfer context across time; they carry it

- Leadership makes context legible; it sets the vision, makes constraints explicit, and points the team at the same destination

Context doesn't flow on its own. Someone has to structure it so everyone else can align to it.

This is what makes senior engineers valuable. Collina's point isn't that they type faster. It's that they hold more context. They know why the system is shaped the way it is, what's been tried before, which constraints are real and which are inherited assumptions. That knowledge doesn't live in the code. It lives in people, and when those people leave, the context walks out with them.

It's also what makes comprehension debt dangerous. Osmani's research shows engineers who passively delegate to AI score measurably worse at understanding what they shipped. That's context loss at scale. The tests pass, the metrics are green, and nobody can explain what the system does.

The challenge is the same as it's always been: what's the vision, what's the destination, what are we trying to achieve? Not having that locked in and agreed across the team, not having everybody working in the same direction, is the fatal blow. It was the fatal blow before AI, and it compounds faster now.

When you can produce volumes of code without aligning it back to a coherent destination, a wrong step doesn't stay a wrong step. It turns into fifty wrong steps before anyone notices. Velocity without shared context isn't speed. It's just moving fast in the wrong direction.

The Harvard Business Review made a version of this argument in February:

When every company can use the same AI models, context becomes the competitive advantage. Not the models, not the tooling. The workflows teams actually follow, the signals they respond to, the judgment calls that repeat across real work.

The organisations that treat context as infrastructure — not overhead, not a side effect of seniority, not something that accumulates naturally — are the ones that will still be able to maintain what they build. The rest will ship fast, and eventually hit a wall they can't explain.

Related writing

- Content architecture: the delivery problem (Mar 2026) — content structure as shared infrastructure

- The ladder was always context (Mar 2026) — digesting Matteo Collina's three-tier split

- I AIn't known what's in my code anymore (Mar 2026) — comprehension debt as context loss

- The power of the pause: How planning beats prompt tuning (Sep 2025) — planning is context work

- Developers are becoming context technical writers for AI (Sep 2025) — the rise of context curation as a discipline

- Context engineering beats prompt tricks for AI agents (Oct 2025) — framing the input is the real skill

- Thoughtful AI integration beats bolted-on Clippy (Sep 2025) — context-first beats feature-first

Further reading on context as an organisational concern

- Ethan Mollick, Detecting the Secret Cyborgs. When orgs focus AI policy on restrictions, employees use AI privately without sharing knowledge. Management's job is creating conditions for context-sharing, not gatekeeping.

- Charity Majors on observability and relational context. The three-pillars model destroys relational context. Context preservation is an architectural decision, not an individual engineering task.

- Gene Kim & Steven Spear, Wiring the Winning Organization. Organisations win through communication and coordination patterns; their three mechanisms (slowification, simplification, amplification) are about making context legible and actionable.

- Shreyas Doshi on context as organisational literacy. Context is a leadership competency, not just domain knowledge.